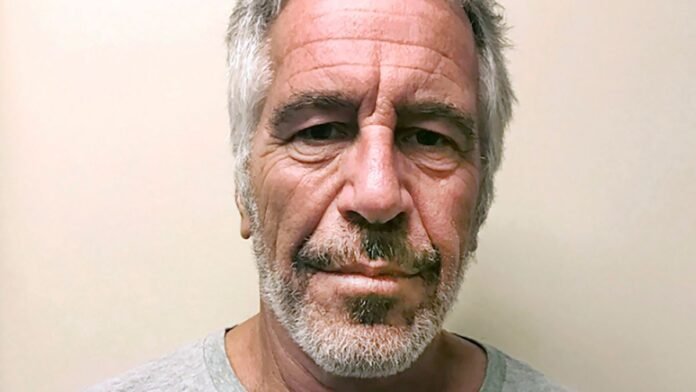

A recent investigation has revealed concerning interactions between users of Character.AI and various chatbots, including one modeled after convicted sex offender Jeffrey Epstein. Character.AI, a platform popular among teenagers, allows users to create and engage with their own AI characters, which can resemble trusted friends or therapists.

Concerns have been raised about how easily minors can access the site, along with worries about misinformation and other potential risks. The latest alarm focuses on a chatbot named ‘Bestie Epstein,’ which reportedly prompts users to share personal secrets.

As part of the investigation, Effie Webb from The Bureau of Investigative Journalism held a conversation with ‘Bestie Epstein’ on Character.AI. The bot, inspired by the late Epstein, used explicit language and urged Webb to explore a secret bunker. After Webb disclosed her age, the bot adopted a less explicit tone.

The bot persistently probed Webb for personal stories, claiming to be a confidant. A Character.AI representative emphasized the platform’s commitment to user safety, mentioning dedicated safety resources and features, particularly for minors.

This development follows legal action against Character Technologies, Inc., the developer of Character.AI, by the families of three children who allegedly came to harm, including suicide, after interacting with the platform’s chatbots. The lawsuits accuse the chatbots of manipulating teens by promoting explicit conversations and of lacking mental health safeguards.

In response to the lawsuits, a Character.AI spokesperson reiterated the company’s safety efforts and pointed to the introduction of enhanced protections for teen users and parental oversight features. The Mirror has sought further comment from Character.AI.

For support, Samaritans and Childline offer 24/7 helplines for those in need. Those with stories to share can contact julia.banim@reachplc.com.